Sony's patent aims to improve accessibility for deaf gamers by introducing real-time, in-game sign language translation. The technology is intended to bridge communication gaps between players who use different sign languages.

Sony Patents Real-Time Sign Language Translation for Video Games

Leveraging VR and Cloud Gaming Technologies

This patent, titled "TRANSLATION OF SIGN LANGUAGE IN A VIRTUAL ENVIRONMENT," details a system enabling real-time translation between sign languages, such as American Sign Language (ASL) and Japanese Sign Language (JSL). The goal is to facilitate in-game communication for deaf gamers.

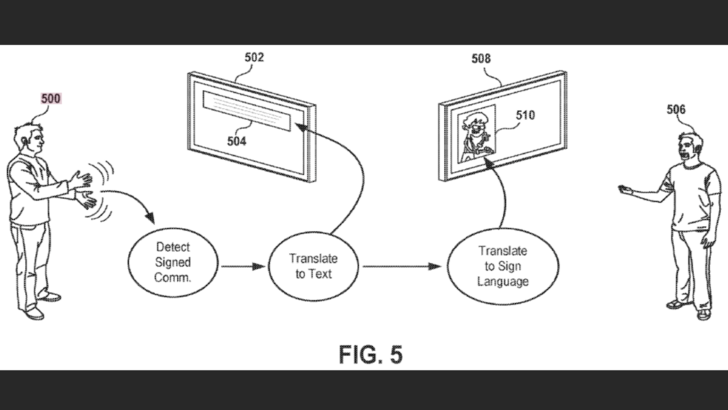

The proposed technology uses on-screen virtual indicators or avatars to display translated sign language in real time. Translation happens in three steps: sign gestures are first converted to text, the text is then translated into the target language, and the translated text is finally rendered as gestures in the target sign language.
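The three steps above can be sketched as a simple pipeline. This is only an illustration under loud assumptions, not Sony's implementation: the gesture labels, lookup tables, and the `translate_sign` function are all hypothetical, and small dictionaries stand in for the gesture-recognition and translation models a real system would need.

```python
from dataclasses import dataclass

# Hypothetical toy tables. A real system would use gesture-recognition and
# machine-translation models; the article does not describe those details.
ASL_GESTURE_TO_TEXT = {"asl_hello": "hello", "asl_thank_you": "thank you"}
ENGLISH_TO_JAPANESE = {"hello": "こんにちは", "thank you": "ありがとう"}
JAPANESE_TEXT_TO_JSL_GESTURE = {"こんにちは": "jsl_hello", "ありがとう": "jsl_thank_you"}


@dataclass
class TranslationResult:
    source_text: str      # step 1 output: text recognized from the source gesture
    target_text: str      # step 2 output: text in the target language
    target_gesture: str   # step 3 output: gesture label an avatar would perform


def translate_sign(gesture_id: str) -> TranslationResult:
    """Run the three-step flow: gesture -> text -> translated text -> gesture."""
    source_text = ASL_GESTURE_TO_TEXT[gesture_id]                # step 1
    target_text = ENGLISH_TO_JAPANESE[source_text]               # step 2
    target_gesture = JAPANESE_TEXT_TO_JSL_GESTURE[target_text]   # step 3
    return TranslationResult(source_text, target_text, target_gesture)


if __name__ == "__main__":
    print(translate_sign("asl_hello"))
```

Routing through text in the middle means each sign language only needs a recognizer and a renderer of its own, rather than a dedicated translator for every pair of sign languages.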

As Sony explains in the patent: "Implementations...relate to methods and systems for capturing sign language...and translating...to another user...Because sign languages vary...this provides a need for appropriately capturing...and generating new sign language as output..."

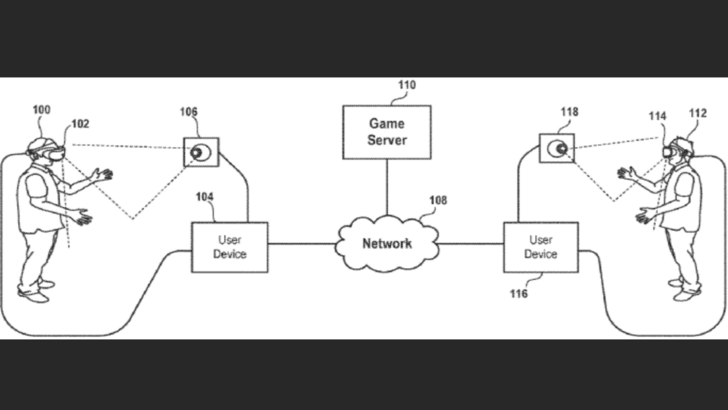

Sony envisions implementing this system using VR headsets or head-mounted displays (HMDs). These HMDs would connect to a user device (PC, game console, etc.), providing an immersive virtual environment.

Furthermore, Sony proposes a networked system where user devices communicate with each other and a game server. This server manages the game's state, ensuring synchronization across all players. The patent also suggests integration with cloud gaming systems, enabling seamless streaming and rendering of the game.
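A rough sketch of how such a server might keep players in sync is shown below. Everything here is assumed for illustration: the `GameServer` class, its method names, and the message fields are hypothetical and do not come from the patent. The idea is just that one server holds the shared state and tells each client which sign language to render a message in.

```python
from dataclasses import dataclass, field


@dataclass
class GameServer:
    """Hypothetical server that holds shared state and relays signed messages."""
    sign_language_by_player: dict[str, str] = field(default_factory=dict)
    inbox_by_player: dict[str, list[dict]] = field(default_factory=dict)
    tick: int = 0  # increases with every message so clients can order events

    def join(self, player_id: str, sign_language: str) -> None:
        # Register the player and the sign language their client should render.
        self.sign_language_by_player[player_id] = sign_language
        self.inbox_by_player[player_id] = []

    def submit_sign(self, sender_id: str, text: str) -> None:
        # Deliver the recognized text to every other player, tagged with the
        # sign language that player's avatar should use when rendering it.
        self.tick += 1
        for player_id, language in self.sign_language_by_player.items():
            if player_id == sender_id:
                continue
            self.inbox_by_player[player_id].append(
                {"tick": self.tick, "from": sender_id, "text": text, "render_in": language}
            )


if __name__ == "__main__":
    server = GameServer()
    server.join("alice", "ASL")
    server.join("kenji", "JSL")
    server.submit_sign("alice", "hello")
    print(server.inbox_by_player["kenji"])
```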

This architecture allows players to share gameplay and interact within the same virtual environment, with real-time sign language translation handling communication between them. If implemented, it could make online games considerably more inclusive for deaf players.